Discover essential guardrails for building safe and compliant AI sales systems. Learn how to ensure brand consistency, data privacy, legal compliance, and effective human-in-the-loop strategies to mitigate risks and empower your sales team.

Welcome back to our series recapping our webinar on inbound sales optimization. If you're just joining us, you can find our previous articles in this series to catch up on the conversation.

The webinar features Frank Sondors, the founder of Salesforge, a company that helps businesses build pipeline with AI-powered outbound tools, and Dom Chiarenza, our Head of Sales here at SalesApe, a solution that uses an AI sales agent to qualify and manage inbound leads.

So far, we've covered the importance of speed to lead, the ideal response times for different industries, the common human-centric bottlenecks that prevent efficiency, and a real-world case study. Now, we'll dive into one of the most important, and often overlooked, topics when it comes to AI: compliance and risk mitigation.

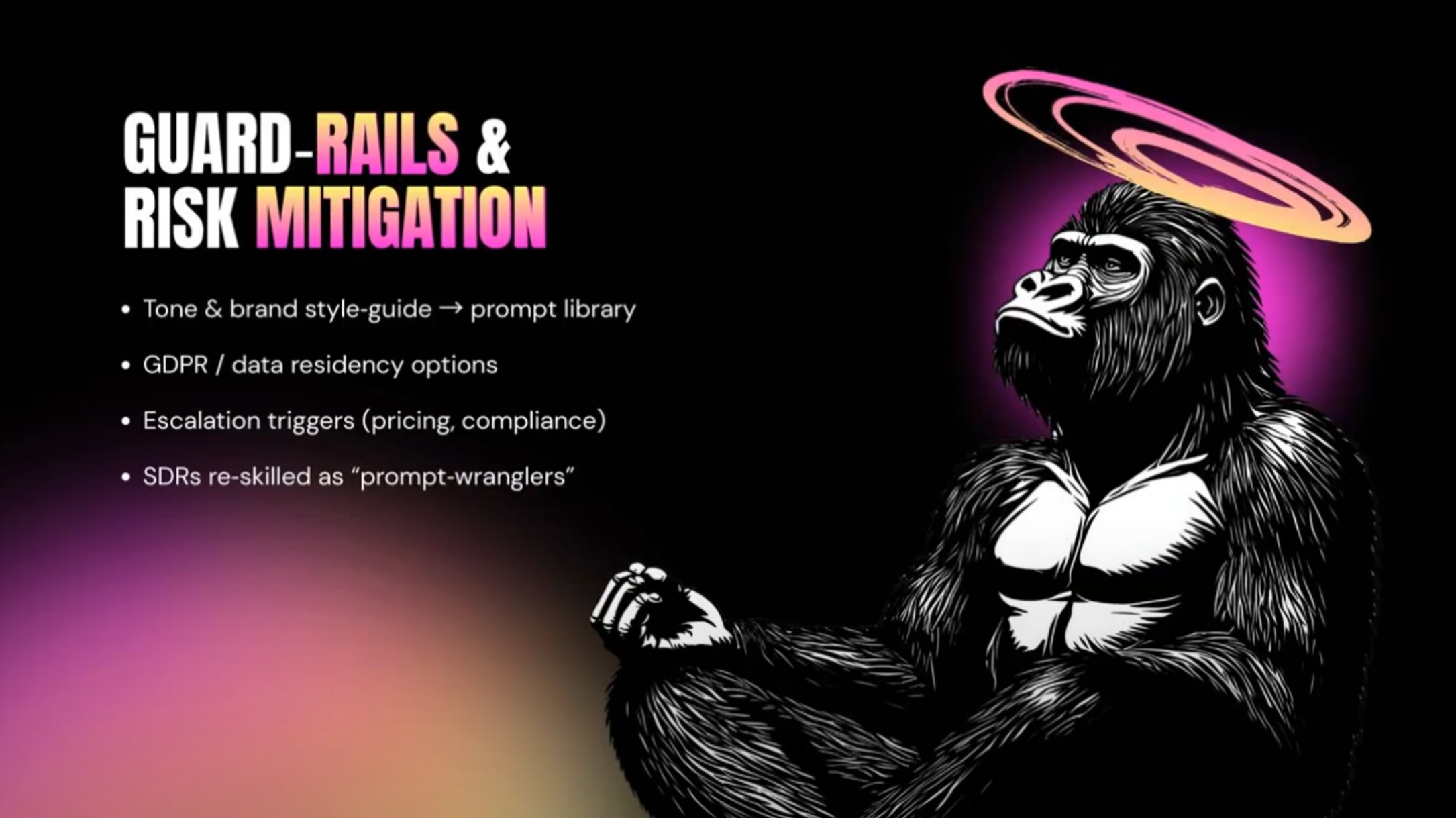

The promise of AI agents is huge, but it’s natural to wonder about the risks. What if the AI says something wrong? What about brand consistency, data privacy, or legal compliance? The good news is that the best AI sales solutions are built with these risks in mind, using specific "guardrails" to mitigate them. Implementing these safeguards is essential to building a reliable, scalable system that you can trust.

Your brand is more than just your identity; it’s your livelihood and the livelihood of all your employees. With data privacy, security, and compliance at stake, you can't afford to have an AI agent go rogue. The first step is to establish a clear brand style guide and tone of voice. This isn't just a simple set of rules; it's turned into a prompt library that guides the AI. It ensures that every single interaction is on brand and consistent. The AI is trained to understand your company's voice, whether that means professional and personable or includes subtle humor. This prevents the AI from sounding generic or saying things that don't align with your company's values.

In an age of GDPR and other data privacy regulations, compliance is non-negotiable. An AI solution must be compliant with local and international laws, especially for sensitive industries like finance and healthcare. The AI agent needs to be configured to handle data residency and protect personal information. Beyond that, you need to ensure the AI's data processing is secure and that all data is handled ethically, protecting you from potential legal penalties and reputational damage.

AI is powerful, but it's not a complete replacement for human interaction. It's a tool to augment your team. That’s why a crucial guardrail is setting up escalation triggers. This is your safety net. If a prospect asks about a very specific or complex topic, or if they get frustrated and say, "I need to speak with a human right now," the AI should stop immediately and hand the conversation over to a person. The AI's job is to qualify and nurture, but it must be able to recognize when it's time to bring in a human expert.
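As a rough illustration of what an escalation trigger can look like, here is a minimal Python sketch. The trigger phrases, the topic list, and the names `check_escalation` and `Decision` are all hypothetical and chosen for this example; a production system would more likely rely on intent or sentiment classification than on keyword patterns.

```python
import re
from dataclasses import dataclass

# Hypothetical patterns for illustration only; real deployments would tune
# these or replace them with an intent/sentiment model.
HUMAN_REQUEST = re.compile(
    r"\b(speak|talk)\s+(to|with)\s+(a\s+)?(human|person|someone|representative)\b",
    re.IGNORECASE,
)
FRUSTRATION = re.compile(
    r"\b(frustrat\w*|ridiculous|useless|not helpful|waste of time)\b",
    re.IGNORECASE,
)
COMPLEX_TOPIC = re.compile(
    r"\b(contract|legal|custom pricing|integration|sla)\b",
    re.IGNORECASE,
)


@dataclass
class Decision:
    escalate: bool
    reason: str


def check_escalation(message: str) -> Decision:
    """Decide whether the AI should stop and hand off to a human."""
    # An explicit request for a human always wins.
    if HUMAN_REQUEST.search(message):
        return Decision(True, "human_requested")
    # Signs of frustration come next.
    if FRUSTRATION.search(message):
        return Decision(True, "frustration")
    # Finally, topics the AI is not approved to handle on its own.
    topic = COMPLEX_TOPIC.search(message)
    if topic:
        return Decision(True, f"complex_topic:{topic.group(1).lower()}")
    return Decision(False, "none")
```

Calling `check_escalation("I need to speak with a human right now")` returns a decision with `escalate=True`, at which point the agent would stop replying and route the conversation to a rep. Checking the explicit request first is a deliberate choice: a prospect who asks for a person should never be kept in the automated flow.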

The fear that AI will replace sales reps is a misconception. Instead, AI is redefining the role of the SDR, turning them into strategic thinkers. Think of your SDRs as "prompt wranglers" or "AI managers." They're the ones who train the AI, monitor its performance, and step in to handle the high-value, complex conversations. This shifts their focus from repetitive, low-impact tasks like chasing cold leads to more fulfilling, higher-impact work. By implementing these systems, you're not just improving your conversion rates; you're also empowering your team to achieve their full potential.

By building these guardrails into your AI system, you can ensure a reliable, scalable, and compliant operation that drives more conversions and helps you sleep better at night.

The key to maintaining brand integrity is a robust prompt library based on your company’s specific style guide and tone of voice. Modern AI agents are trained on these parameters to ensure every interaction is consistent with your values. By setting strict operational boundaries within the software, you can prevent the AI from sounding generic or providing information that falls outside of your approved messaging.
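To make the idea of a prompt library with operational boundaries more concrete, here is a small Python sketch. It assumes a hypothetical `StyleGuide` structure, and the tone, topics, and banned phrases are invented examples; it is not how any particular product implements this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StyleGuide:
    """Hypothetical container for a brand's tone and messaging limits."""
    tone: str
    approved_topics: tuple[str, ...]
    banned_phrases: tuple[str, ...]


def build_system_prompt(guide: StyleGuide) -> str:
    """Turn the style guide into instructions for the AI agent."""
    topics = ", ".join(guide.approved_topics)
    return (
        f"Write in a {guide.tone} tone. "
        f"Only discuss these topics: {topics}. "
        "If asked about anything else, offer to connect the prospect "
        "with a team member."
    )


def find_violations(reply: str, guide: StyleGuide) -> list[str]:
    """Return any banned phrases found in a drafted reply."""
    lowered = reply.lower()
    return [p for p in guide.banned_phrases if p.lower() in lowered]


# Example values are assumptions made up for this sketch.
guide = StyleGuide(
    tone="professional and personable",
    approved_topics=("pricing tiers", "booking a demo"),
    banned_phrases=("guaranteed results", "best price anywhere"),
)
```

A drafted reply can be passed through `find_violations` before it is sent; a non-empty result means the reply falls outside approved messaging and should be regenerated or handed to a person.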

In the US, data privacy is governed by a patchwork of state laws, with California's SB 243 and CPRA amendments often treated as the benchmark. To remain compliant, AI solutions need to honor opt-out requests and be transparent about what data is being collected. Leading AI agents also include specific guardrails for Automated Decision-Making Technology (ADMT), giving consumers the right to access information about how the AI is processing their data and the ability to opt out of automated profiling.
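Honoring an opt-out request can be sketched in a few lines of Python. The `ConsentRegistry` class, the `handle_inbound` function, and the opt-out phrases below are assumptions made for illustration; actual compliance requirements depend on the jurisdiction and should be reviewed with legal counsel.

```python
import re
from dataclasses import dataclass, field

# Hypothetical opt-out phrases; a real list would be broader and reviewed.
OPT_OUT = re.compile(
    r"\b(unsubscribe|opt(?:\s+|-)?out|do not contact)\b",
    re.IGNORECASE,
)


@dataclass
class ConsentRegistry:
    """In-memory record of contacts who opted out (a database in practice)."""
    opted_out: set[str] = field(default_factory=set)

    def record_opt_out(self, contact_id: str) -> None:
        self.opted_out.add(contact_id)

    def may_contact(self, contact_id: str) -> bool:
        return contact_id not in self.opted_out


def handle_inbound(registry: ConsentRegistry, contact_id: str, message: str) -> str:
    """Return the action the AI agent should take for this message."""
    # Record the opt-out before anything else so it is never missed.
    if OPT_OUT.search(message):
        registry.record_opt_out(contact_id)
        return "opted_out"
    # Contacts who opted out earlier get no automated replies.
    if not registry.may_contact(contact_id):
        return "suppressed"
    return "continue"
```

The opt-out check runs first on every message, so a request is recorded even if the rest of the message looks like a normal sales question. In this sketch, a later message from an opted-out contact is marked `"suppressed"` and left for a human to review.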

A reliable AI system uses human-in-the-loop escalation triggers. If a prospect asks a highly technical question or expresses frustration, the AI is programmed to recognize these cues as a signal to stop and immediately hand the conversation over to a human expert. This ensures that the AI handles the high-volume qualification, while your team steps in for the high-nuance interactions.

Rather than replacing your team, AI redefines the SDR role. It shifts their focus away from repetitive, low-impact tasks like chasing cold leads and manual data entry. Your SDRs become strategic AI managers or prompt wranglers who monitor the system's performance and step in to close high-value deals. This allows your team to achieve their full potential by focusing on complex, fulfilling work.

A blended model provides a safety net that fully autonomous systems lack. By using AI to manage the initial "speed to lead" and basic qualification, you ensure no lead is ignored. However, keeping humans in the loop for oversight and complex decision-making ensures that the "human touch" is always available when a deal requires empathy, creative problem-solving, or sophisticated negotiation.