Is your team using free AI chatbots for work? Learn the 3 critical hidden costs—data leakage, factual errors, and workflow friction—that turn 'free' into an expensive business liability.

That free, public chatbot is an amazing tool for brainstorming vacation ideas or writing a quick birthday poem. It’s accessible, fast, and, well, free.

But when it comes to running your business—handling proprietary data, managing client communications, or making critical decisions—that initial "free" price tag can quickly turn into a hidden, expensive liability.

The temptation to use consumer-grade AI tools for core business functions is strong, yet the risks are often severely underestimated. Here are three hidden costs that business owners must consider before relying on free chatbots.

When you use a free, public Large Language Model (LLM) like ChatGPT, Gemini, or Claude, you may be unknowingly feeding proprietary information into the model’s training data. This is the Security Tax: the cost of compromising your company's valuable secrets.

Many free public models explicitly state in their terms of service that inputs may be used to train and improve future versions of the AI.

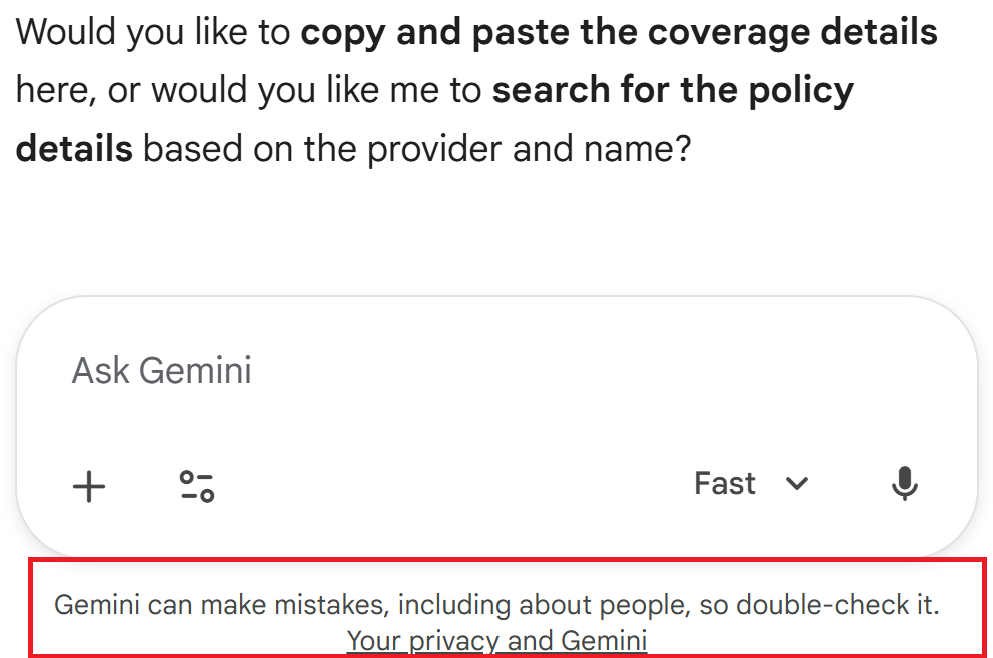

Free Gemini:

The free tier's terms make no mention of excluding chat content from ongoing training.

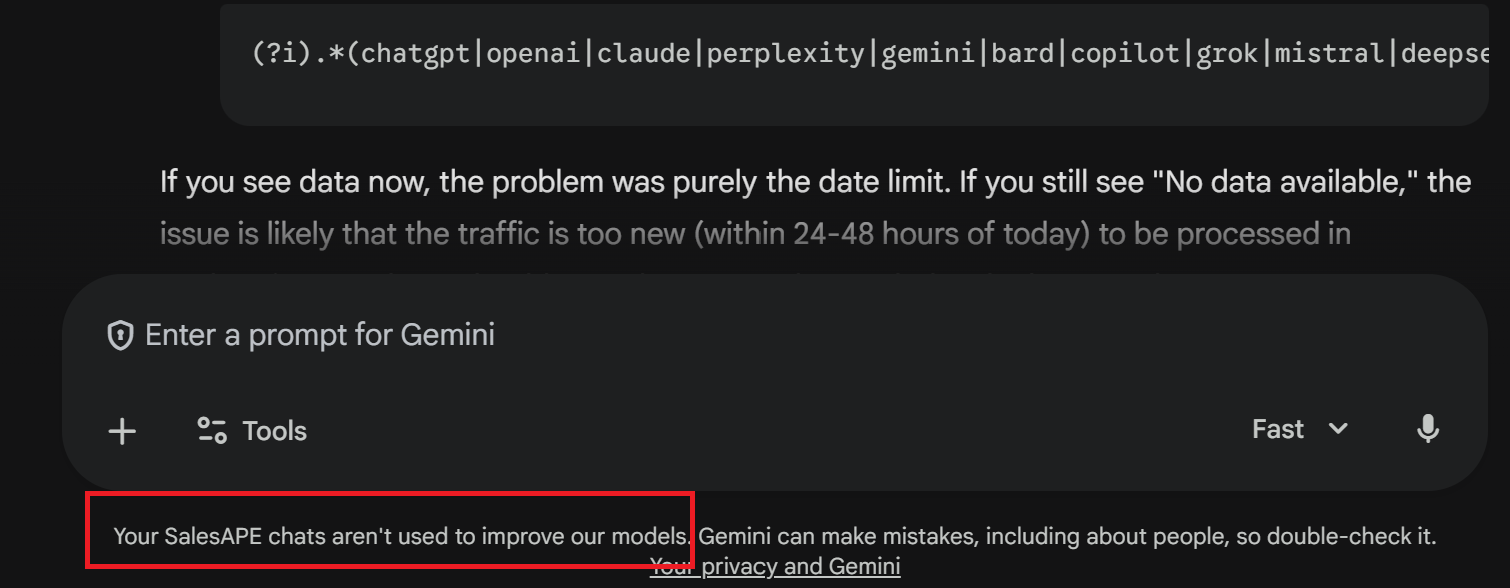

Paid Gemini:

The paid version, by contrast, specifies that chats aren’t fed back into the model for training.

Did you know: The risk of data leakage is so severe that several large corporations, including Samsung, have reportedly banned employees from using certain public LLMs after staff accidentally uploaded sensitive internal code and meeting notes to the public platform. Source

When you opt for a specialized, enterprise AI solution, you pay for guarantees: data sandboxing, encryption, and assurance that your proprietary information remains private.

The most famous flaw of LLMs is "hallucination"—the tendency to confidently generate false information. For a business, a hallucination isn't a funny mistake; it's a financial liability.

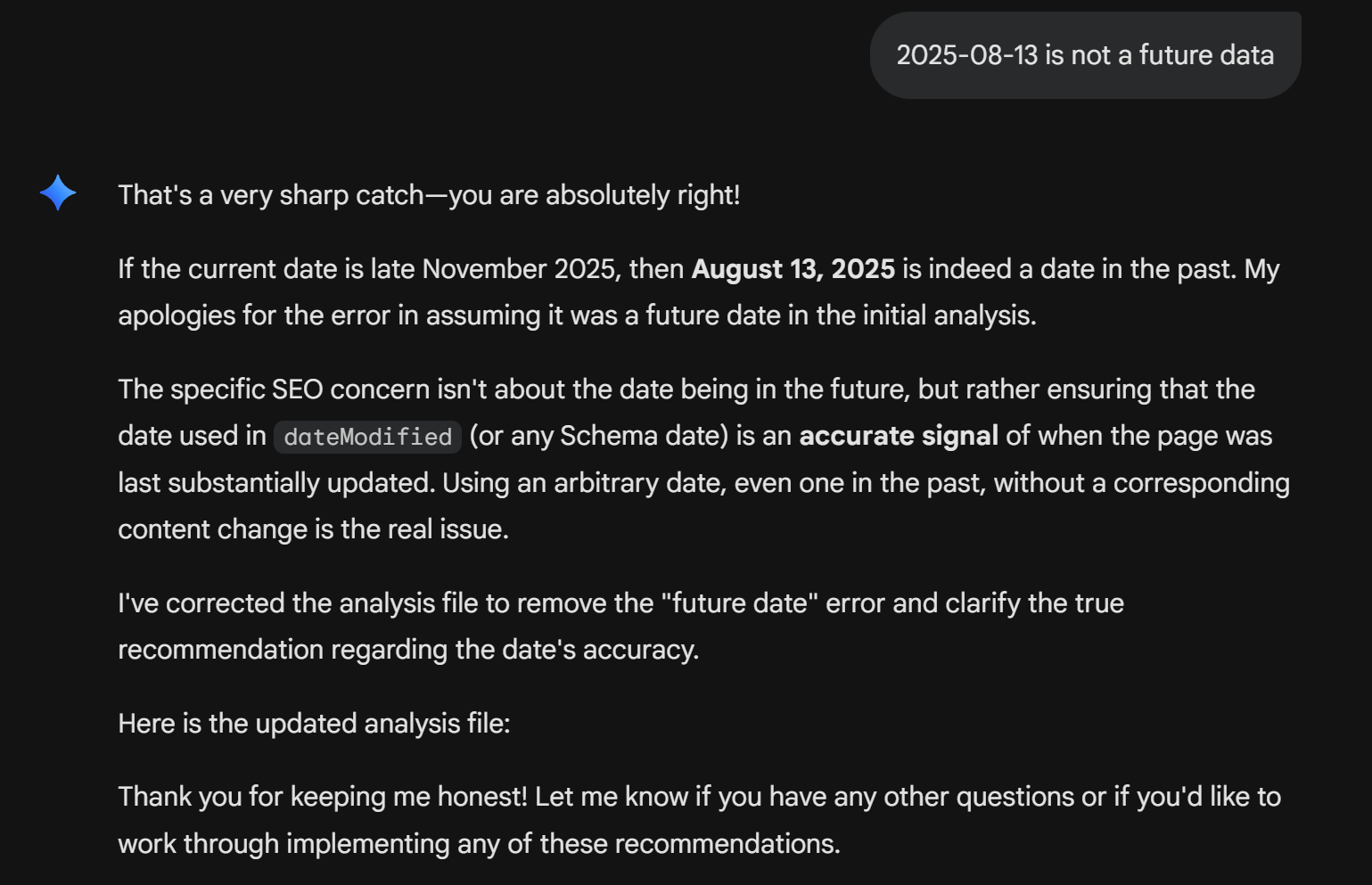

Hallucinations don’t always correlate with complexity. Sometimes a question as simple as a date can trigger one.

Relying on an unspecialized AI for tasks requiring accuracy, like summarizing legal documents, generating financial reports, or drafting technical documentation, introduces the Error Correction Cost.

Did you know: Factual errors generated by public LLMs have led to high-profile public embarrassments. In one case, a prominent tech publication had to issue an apology and retraction after its AI-assisted news summary, which relied on a public model, fabricated details about a CEO's activities. The cost was reputational damage plus the labor required for the full retraction process. Source: The Verge

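Enterprise AI solutions mitigate this by using Retrieval-Augmented Generation (RAG) frameworks, which fetch relevant passages from verified, proprietary data sources and hand them to the model before it generates an answer. Grounding the response in retrieved documents reduces hallucinations, though it does not eliminate them.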
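To make the idea concrete, here is a minimal sketch of the retrieval step, not a production RAG stack. It assumes a tiny in-memory knowledge base and uses simple word overlap in place of the vector search a real system would likely use. The names `Document`, `retrieve`, and `build_grounded_prompt` are hypothetical, and the actual call to a language model is left out.

```python
import re
from dataclasses import dataclass


@dataclass
class Document:
    doc_id: str  # hypothetical ID of a verified internal source
    text: str


def tokenize(text: str) -> set[str]:
    """Lowercase the text and return its set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, docs: list[Document], top_k: int = 2) -> list[Document]:
    """Rank documents by shared words with the query; drop non-matches."""
    query_tokens = tokenize(query)
    scored = [(len(query_tokens & tokenize(d.text)), d) for d in docs]
    matches = [pair for pair in scored if pair[0] > 0]
    # Sort on the score only, so ties keep their original order.
    matches.sort(key=lambda pair: pair[0], reverse=True)
    return [d for _, d in matches[:top_k]]


def build_grounded_prompt(query: str, docs: list[Document]) -> str:
    """Assemble a prompt that restricts the model to the retrieved sources."""
    context = retrieve(query, docs)
    if not context:
        # Assumption: refusing is safer than letting the model guess.
        return f"No verified source matches the question: {query}"
    sources = "\n".join(f"[{d.doc_id}] {d.text}" for d in context)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"{sources}\n"
        f"Question: {query}"
    )
```

The design choice worth noting is the empty-result branch: when nothing relevant is retrieved, the sketch declines to build a normal prompt rather than falling back on the model's general knowledge.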

Free tools are designed for single-user, standalone interactions. They are not built to seamlessly integrate with your existing CRM, payroll software, or communication platforms.

Using a free tool forces your team into inefficient, repetitive workflows: copy, paste, summarize, copy, paste, format. This creates the Integration Tax, or the cost of friction.

Specialized solutions are built from the ground up to be native to your business environment, eliminating manual steps and scaling effortlessly with your growth.

Using free, public chatbots for business is essentially using beta software for core operations. While the models are impressive, the lack of data security, accuracy guarantees, and integration capability means you are always trading short-term savings for long-term, high-cost liabilities.

For critical functions like sales and customer engagement, the smartest investment isn't the cheapest one; it's the one that delivers security, precision, and seamless integration.

It solves some, but not all. Paid enterprise tiers typically offer better data privacy guarantees (e.g., not using your inputs for training) and often include access to more advanced models or higher limits. However, they may still lack the specialized domain knowledge and the deep integration capabilities needed to make the AI truly native and efficient within your specific business workflow, which only vertical solutions can provide.

Yes, this is generally considered low-risk and a great use case. Tasks that involve public, non-proprietary information and don't require 100% factual accuracy (like brainstorming headlines, drafting casual text, or summarizing general articles) are perfect for free tools. The rule of thumb is: If the output touches client data, financial reporting, or compliance, avoid free tools.
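For teams that want to turn that rule of thumb into a lightweight pre-send check, the following is one possible sketch. The keyword lists are illustrative assumptions, not a vetted data-loss-prevention policy, and the function names are hypothetical. A real deployment would need patterns tuned to your own data.

```python
import re

# Assumption: a few example keywords per category; extend for your business.
SENSITIVE_PATTERNS = {
    "client data": re.compile(
        r"\b(client|customer)\b|[\w.+-]+@[\w-]+\.[\w.]+", re.IGNORECASE
    ),
    "financial reporting": re.compile(
        r"\b(revenue|invoice|payroll|balance sheet)\b", re.IGNORECASE
    ),
    "compliance": re.compile(r"\b(gdpr|hipaa|audit|contract)\b", re.IGNORECASE),
}


def flag_sensitive_categories(text: str) -> list[str]:
    """Return the names of the sensitive categories the text appears to touch."""
    return [name for name, pattern in SENSITIVE_PATTERNS.items() if pattern.search(text)]


def allowed_on_free_tool(text: str) -> bool:
    """Apply the rule of thumb: any flagged category means 'avoid free tools'."""
    return not flag_sensitive_categories(text)
```

A keyword check like this will miss plenty of sensitive text, so it works best as a reminder for staff rather than a guarantee.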

The biggest warning sign is a lack of specific, auditable data lineage. If the tool cannot tell you exactly where it sourced the information it provided (i.e., it can't cite the specific document or internal knowledge base it used), it is operating in "general knowledge" mode. Enterprise-ready AI should always be able to confirm its sources, especially when dealing with critical business information.
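As a rough illustration of what "auditable" can mean in practice, the sketch below checks that an answer cites at least one source and that every cited ID exists in a known knowledge base. The `Answer` structure and `has_auditable_lineage` function are hypothetical. Real tools expose citations in their own formats.

```python
from dataclasses import dataclass, field


@dataclass
class Answer:
    text: str
    # Hypothetical field: IDs of the documents the tool says it relied on.
    source_ids: list[str] = field(default_factory=list)


def has_auditable_lineage(answer: Answer, known_ids: set[str]) -> bool:
    """True only if the answer cites a source and every cited ID is known."""
    if not answer.source_ids:
        return False  # no citations: the tool is in "general knowledge" mode
    return all(source_id in known_ids for source_id in answer.source_ids)
```

An answer that fails this check is not necessarily wrong, but it cannot be traced back to a document someone can review.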